Episode 2: Let's meet - AI Engineering Chapter 1

A Study Group Exploring Chip Huyen’s AI Engineering Book

We’re in the spotlight, and the pressure’s on. The eyes of the club are on me, and I’ve got to convince them that I’m the best person to lead the AI Team!

Let’s establish a goal: Convince the Club that they need me and that I am the best person to lead the team's AI initiatives (what a goal!).

The meeting structure is going to have three parts:

Understand uses cases with practical examples: We’ll dive into how AI can be a game-changer in areas like writing, education, data aggregation, and workflow automation.

The planning phase and implementation challenges: Because let’s face it, nothing ever goes according to plan. But that’s where the fun is, right?

The AI Engineering stack, tools, and infrastructure: Basically, I’m going to need a lot of coffee and a ton of computational power to pull this off. (And maybe some fancy tools, too.)

Use cases: Where AI Shines (or at least, Where It Should)

AI offers fields in which it can take advantage, from writing, education, data aggregation, and workflow automation.

At Liverpool FC, we’re drowning in data—22 players, every match, a treasure trove of information that’s mostly sitting idle. It’s like having a gold mine and only digging with a teaspoon.

Take, for example, the challenge of integrating new players. We’ve all seen big-name signings struggle to find their footing. But what if we could personalize their training? Imagine a world where each player has a bespoke learning path, tailored to their strengths and weaknesses. We want players who can adapt and be aware of the challenges of each position with a dedicated program to cover those needs based on their previous matches this will boost our performance in every competition.

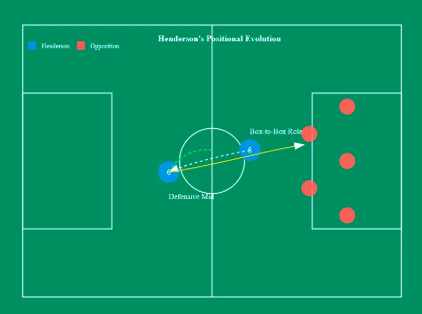

Remember when Jordan Henderson switched from a box-to-box midfielder to a deeper-lying defensive role? It wasn’t exactly smooth sailing at first. But with targeted training, he mastered the art of scanning the field and adapting to low-block defences. The result? A versatile midfielder who can switch roles that lead him to solve the adaptation challenges.

We can think on writing reports aggregating data from different sources to get a report that is able to condense the information available in simple terms, with adaptation for every role in the club.

Planning: What does success look like?

This is a critical question to set our path to success (the eyes can only see what the mind understands).

Returning to our idea of helping players in training and position on the pitch, we can define success as reducing the number of players who struggle to adapt to new roles. Intervention results provide a plan to follow. At first, it might be difficult to convince players, managers, and staff that it works, meaning that interpretability is necessary.

AI Engineering stack

Application development: Provide models with a good prompt and enough context, this layer requires rigorous evaluation and a good interface.

Model development: Modeling and training, fine-tuning, and interface optimization, also require dataset engineering, and it also requires rigorous evaluation.

Infrastructure: Model serving, managing data, and compute, and monitoring

As an AI Engineer, I’ll focus less on modeling and training, and more on model adaptation, as efficient training and inference optimization with experience with GPUs and big clusters.

Model adaptation can be divided into two categories:

Prompt-based techniques: You adapt a model by giving it instructions and context instead of changing the model itself. However, prompt engineering might not be enough for complex tasks or applications with strict performance requirements.

Finetuning: finetuning techniques are more complicated and require more data, but they can improve your model’s quality, latency, and cost significantly.

If we are thinking of getting a project done using foundational models as the base it is important to understand the difference and the set of skills needed for success:

ML Knowledge is a nice to have, not a must to have (this can be discussed but overall you don’t need to understand gradient descent to have a good product with foundational models)

Dataset engineering (Less about feature engineering and more about data deduplication, tokenization, context retrieval, and quality control)

Inference optimization is a critical component to build on foundational models as waiting time is related to losing attention and even good decisions at the wrong time.

A significant shift we need to perform is the ability to turn ideas into demos, get feedback, and iterate. Full-stack engineers have an advantage here, as they think of the process from the user product and then the backend, this change in how we approach things can help to build the product first and only invest in data and models once the product shows promise.

We got a call from the manager saying:

I like the approach you took, it was technical but you made a concrete example that help the staff and the data team understand where are you coming from, people are excited and they have many expectations, big expectations on what you can achieve.

However, we have some concerns about your position, not the staff but the ML team, they are engineers with over 20 years of experience that worked in top companies from all over the world and they have reservations on how foundational models work and how YOU, specifically, can work with that and are planning to use the data. They asked me to sent you some structure for the next meeting and this is what they want to discuss

Now we have pressure, I haven’t met this ML Team and they already have concerns about what I said, after all, I am saying you don’t need the core ML knowledge and the front end is more important than gradient descent, but let’s break down how are we going to convince them:

Next week plan

Training Data (pp. 50–56):

What kind of data does Liverpool FC have, and how can we use it to train or adapt foundation models?

How can we ensure the data is high-quality and relevant to our use cases (e.g., player performance analysis)?

Modeling (pp. 58–78):

What model architecture and size would be best suited for our application, and why?

How can we balance performance, cost, and latency when choosing a model?

Post-Training (pp. 78–88):

How can supervised fine-tuning help us adapt a foundation model to Liverpool FC’s specific needs?

What role does preference finetuning play in aligning the model with the preferences of coaches and players?

Sampling (pp. 88–96):

How can we use sampling strategies to improve the quality and consistency of the model’s outputs?

What challenges might we face due to the probabilistic nature of AI, and how can we address them?

Probabilistic Nature (pp. 105–111):

How can we design workflows that account for the uncertainty in AI outputs?

What strategies can we use to minimize the impact of hallucinations or inconsistent outputs?

We’re in this together, and with a bit of luck, a lot of coffee, and Chapter 2 of Chip’s Book, we’ll make it work!

Read the next Chapter